Analytics and strategy help us navigate the fog of war, but don’t necessarily make us responsible or wise.

The ascendance of analytics and its importance in strategy today is truly remarkable. One only needs to admire the seemingly simple act of a Google search to marvel at how analytics is affecting our ability to adapt and learn.

Yet with this rapid increase in instrumental power, more and more people are being left behind. The concentration of power and wealth in the hands of a few individuals and corporations has worrying implications for equality, liberty, and our relationship with truth. Our ability to collectively deal with the great challenges of our time (e.g., climate change) is hampered by algorithms that introduce rage and division into our political discourse.

Business strategy for a handful of technology companies has been staggeringly successful, especially in the last two decades. But what impact has this success had on the grand strategy of humanity and life? With the incredible opportunities technology has opened up to us, how are we managing the longer-term threats that powerful technology poses to human flourishing and the common good?

A wise strategy for life and humanity is an ongoing pursuit. Trying to expand our caring while reducing threats is a challenge that spans all generations. In this series of articles, I will explore how we can pursue responsible strategy.

- Pursuing Responsible Analytics (this article)

- From Strategy to Grand Strategy

- How Ethics Informs Strategy

- How We Can Pursue Responsible Strategy

Pursuing Responsible Analytics

Analytics helps us answer questions about the uncertainty and complexity of our world by finding meaningful patterns in data. One of the ways we perform analytics is by building models – simplified versions of reality – which produce predictions in answer to our questions about the world. With analytics we test these approximations of reality until we have sufficiently worked out the dynamics of a problem or reduced its risks to a satisfactory level.

Models

Models range from simple to complex computation and are always limited by a finite amount of processing power. To answer ‘what will the weather be tomorrow?’ a human brain or a computer constructs a model that applies statistics to some data points to make a prediction. The data must be restricted to relevant observations, such as cloud cover and current temperature, rather than irrelevant data such as the current price of Bitcoin.

For models to work effectively and efficiently, modellers must narrow the scope of what their models can see to only the information that is potentially helpful. Reducing the variable space makes modelling possible. Yet here lies the potential danger – what variables does the modeller choose to leave out, and what are the consequences of ignoring that data?
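The effect of variable choice can be sketched in a few lines of Python. Everything here is an assumption made for illustration: the observations are invented, and `predict_rain` is a hypothetical nearest-neighbour vote, not a real forecasting method. The sketch only shows that the same model can give opposite answers depending on which variables the modeller lets it see.

```python
from math import dist

# Invented past observations (hypothetical data, for illustration only).
OBSERVATIONS = [
    {"cloud_cover": 0.90, "temp_c": 12.0, "bitcoin_usd": 30000.0, "rained": True},
    {"cloud_cover": 0.80, "temp_c": 14.0, "bitcoin_usd": 31000.0, "rained": True},
    {"cloud_cover": 0.85, "temp_c": 11.0, "bitcoin_usd": 29500.0, "rained": True},
    {"cloud_cover": 0.10, "temp_c": 25.0, "bitcoin_usd": 42000.0, "rained": False},
    {"cloud_cover": 0.20, "temp_c": 27.0, "bitcoin_usd": 41000.0, "rained": False},
    {"cloud_cover": 0.15, "temp_c": 24.0, "bitcoin_usd": 43000.0, "rained": False},
]

# Today's conditions: cloudy and cool, with a high Bitcoin price.
TODAY = {"cloud_cover": 0.90, "temp_c": 13.0, "bitcoin_usd": 42000.0}


def predict_rain(today, features, k=3):
    """Majority vote of the k most similar past days, judged only on `features`."""
    def distance(obs):
        return dist([obs[f] for f in features], [today[f] for f in features])

    nearest = sorted(OBSERVATIONS, key=distance)[:k]
    rainy_votes = sum(obs["rained"] for obs in nearest)
    return rainy_votes * 2 > k


# The modeller decides what the model is allowed to see.
with_relevant = predict_rain(TODAY, ["cloud_cover", "temp_c"])
with_bitcoin = predict_rain(TODAY, ["cloud_cover", "temp_c", "bitcoin_usd"])

print("Relevant variables only:", with_relevant)
print("Bitcoin price included: ", with_bitcoin)
```

With only cloud cover and temperature, the three most similar past days are all rainy, so the sketch predicts rain. Once the Bitcoin price is included, its large numeric scale dominates the distance calculation, the model matches on price instead, and the prediction flips to a dry day.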

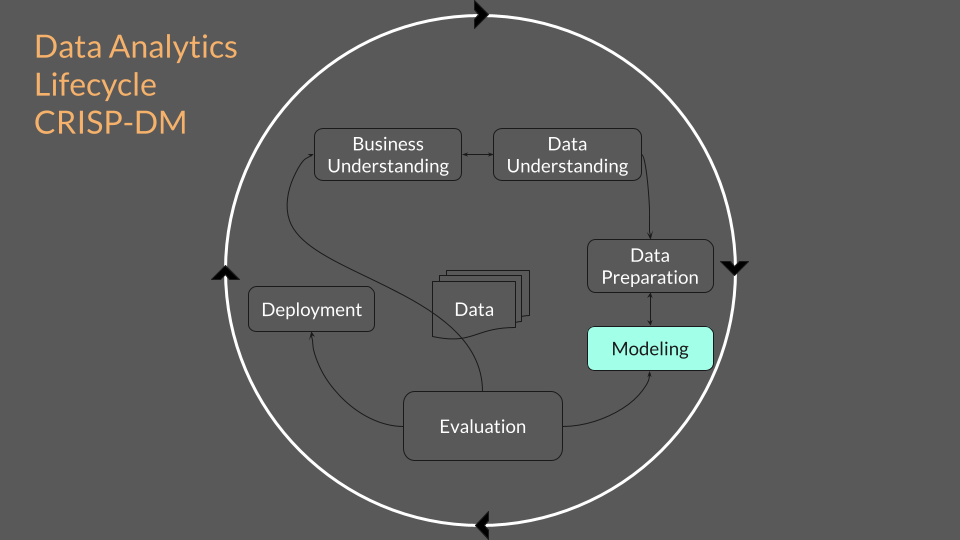

One of the classic representations of the analytics lifecycle is CRISP-DM, the Cross-Industry Standard Process for Data Mining.

This lifecycle acknowledges that the modelling of data is only one part of the process. Understanding, preparation, evaluation, deployment, and ongoing oversight of the data are crucial to successfully answering an analytics question.

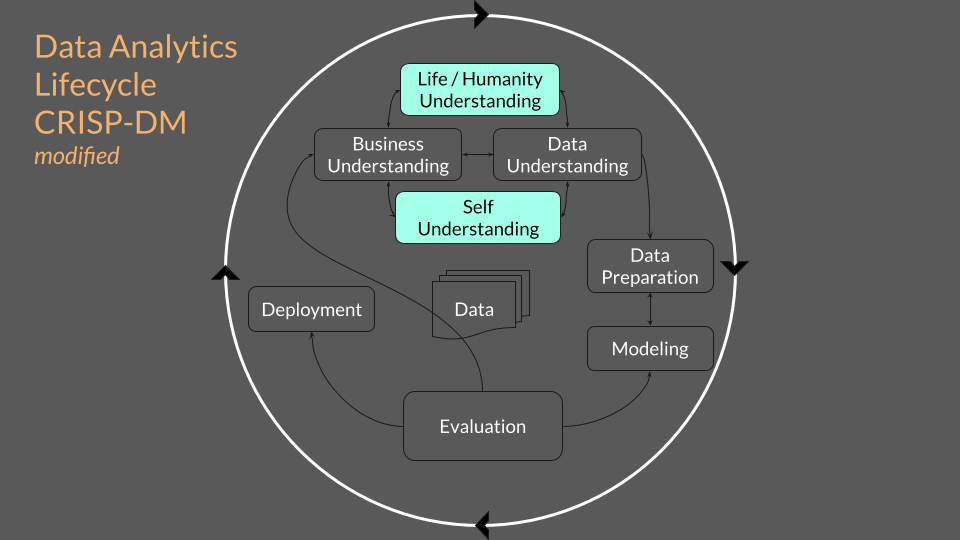

Even if we follow all the steps of CRISP-DM, business and data understanding alone are not enough for responsible analytics at scale. Analytics at scale can generate immense value for users and incredible returns for shareholders, but it can also pose shocking threats to the society and environment in which it is embedded.

To engage with analytics responsibly, we must consider how the business model interacts with broader systems such as population health, political systems, justice, and planetary health. If a business limits its understanding to a customer-centric or purely economic horizon, it runs the risk of ignoring the waste, suffering, or injustice it may be causing in the systems that support it.

Analytics runs on the limited datasets given to it by its creator. This produces models that are heavily influenced by the creator’s values, which in turn are heavily influenced by the organizational and societal culture the creator resides within. If we want to pursue responsible analytics, it becomes critical to look not just outwards, but also inwards at ourselves and our organization’s values, caring, and motivations.

Unintended Consequences

Social media algorithms are a poignant example of a powerful piece of analytics with highly problematic consequences. Human behavioral data is extracted from our clicks, likes, and comments. Artificial intelligence models process this data to predict our behavior. Our news feeds then fill up with personally tailored content determined by analytics, imperceptibly manipulating what we see to achieve maximal exposure for the highest-bidding party. An unintended consequence of this type of analytics is that, in order to keep your attention and keep you consuming tailored content, everybody in your news feed sounds like you. This echo-chamber effect has led to an epidemic of polarization that is making it far harder to have civil discourse at a time when we need to navigate big problems together.
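The echo-chamber mechanism can be illustrated with a deliberately crude sketch. It rests on loud assumptions: each post is reduced to a single invented "stance" score between -1 and 1, and `rank_feed` is a hypothetical ranking rule that treats similarity to past likes as a stand-in for predicted engagement. No real platform is claimed to work this way.

```python
from statistics import mean


def rank_feed(candidate_stances, liked_stances, k=3):
    """Pick the k posts closest to the user's average liked stance.

    Closeness to past likes is used here as a hypothetical proxy for
    predicted engagement.
    """
    user_stance = mean(liked_stances)
    by_similarity = sorted(candidate_stances, key=lambda s: abs(s - user_stance))
    return by_similarity[:k]


def spread(stances):
    """Range of viewpoints present: largest stance minus smallest."""
    return max(stances) - min(stances)


# Invented stance scores (hypothetical data, for illustration only).
candidates = [-0.9, -0.5, -0.1, 0.2, 0.6, 0.7, 0.8, 0.95]
liked = [0.7, 0.8, 0.9]

feed = rank_feed(candidates, liked)

print("Feed shown to the user:", feed)
print("Spread of available posts:", spread(candidates))
print("Spread of the feed:", spread(feed))
```

In this toy setup the pool of available posts covers almost the whole range of stances, yet the ranked feed contains only posts close to what the user already liked. Nothing in the rule aims at polarization; the narrowing falls out of optimizing for similarity alone.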

Mathematical models themselves are not the danger. What a model sees and does not see is up to the modeller. When a model starts affecting its context at large scales, it is up to the modeller to feed the consequences back into the analytical process. Humans, not models, are responsible for the threats that powerful models produce. Human modellers have values, ignorance, standards, and levels of caring – all of which are heavily influenced by the organization and culture they reside within.

Responsible Analytics

Analytics is a learning process that helps us navigate solutions to problems. Modern computing power, mass data, and mathematical models have given analytics great power, revealing both incredible opportunities and incredible threats. To wield this power in a way that supports greater caring while reducing unintentional threats requires a deeper understanding of ourselves and the human and life systems we are a part of.

There are other learning processes that can help us explore broader horizons wisely. Strategy is a way of learning that tries to consider multiple models, paths, and time frames. In the next article in this series, I will discuss how strategy may help us pursue wise decision-making.

References

Zuboff, S. (2019). The Age of Surveillance Capitalism. Profile Books.

Low, K. (2016). The Human Venture Institute mapbook (16th edition). Action Studies Institute.

Cheng, K. (2014). Sunrise in fog [Photograph]. Flickr. https://www.flickr.com/photos/6x7/25879810377/

Provost, F., & Fawcett, T. (2014). Data science for business: What you need to know about data mining and data-analytic thinking. O’Reilly Media.

Suggested Resources

- The Age of Surveillance Capitalism (2019) by Shoshana Zuboff

- Weapons of Math Destruction (2016) by Cathy O’Neil

- Your Undivided Attention – podcast by the Center for Humane Technology

- Humane Tech Design Guide by the Center for Humane Technology

- Other presentations and resources by Nick Kalogirou

- Recommended books by the Human Venture Institute

- Human Venture Meta Framework Map 66: Biosocial Horizon Lines – boundaries of caring

Nick Kalogirou is a Human Venture Leadership alumnus who likes to put technology, ethics, and communication together into understandable and useful stories. This article and other insights can be found on his website, nickkal.com